The first time I explained to my son, then a preschooler, that cartoon characters weren't really real but were drawn and animated on a computer, it blew his mind. How could something that looked so realistic actually be fake? I think I now know how he felt; lately, the more I learn about AI-generated photos, the more my brain wants to rebel against the whole notion.

Maybe you've heard of the website This Person Does Not Exist, which features realistic (yet totally fake) pictures of people who look like they surely exist and yet don't. Photos generated by AI (artificial intelligence) algorithms have gotten so advanced that it's becoming harder to distinguish the real from the fake.

This can make for some stellar movie and video game special effects, sure. And we’re normalising the practice of making fake people for other entertainment purposes with websites like Have They Faked Me that tempt us to go in search of our fake doppelgängers.

But in the wrong hands, these algorithms can also be used to produce propaganda and other fake media. In a time full of fake news, we've had to learn not to believe everything we read; now, we shouldn't believe everything we see, either.


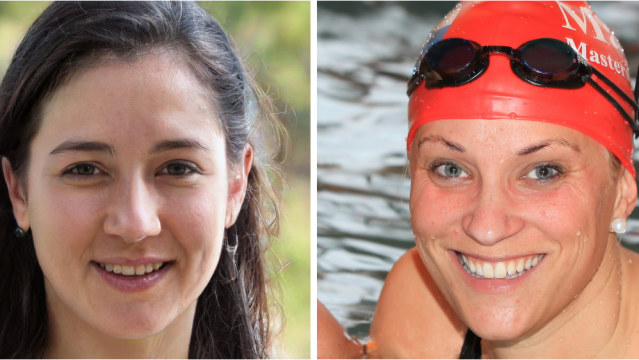

The website Which Face Is Real, created by Jevin West and Carl Bergstrom, aims to teach users to be less trusting of photographic images and more critical of potentially synthetic “photos”:

Computers are good, but your visual processing systems are even better. If you know what to look for, you can spot these fakes at a single glance — at least for the time being. The hardware and software used to generate them will continue to improve, and it may be only a few years until humans fall behind in the arms race between forgery and detection.

There are still a few telltale signs that a photo is a fake. Here are the things West and Bergstrom say we should look for before we assume a photo is real:

- Water splotches. Shiny blobs that look somewhat like water splotches are a distinguishing feature of the current StyleGAN algorithm developed by NVIDIA.
- Weird backgrounds. If the background looks like a torn photo or has strange shapes or other distortions, the image might be fake.
- Asymmetry. Algorithms have all kinds of problems with symmetry, including with facial hair, earrings, fabric and, in particular, eyeglasses.
- Hair. The fake person's hair might look too straight or streaked, or it might have a halo or glow around it.
- Fluorescent bleed. Look for bright colours that bleed from the background onto the hair or face of the person pictured.
- Teeth. This is one of the first things I look at. It's not just that the teeth look imperfect; they're odd (like, three incisors), asymmetrical and, in some photos, almost pixelated.


For now, West and Bergstrom say on Which Face Is Real, there is still a silver bullet you can use to determine whether a face on the internet belongs to a real person:

Right now, we are unaware of any software that can generate multiple images of the same fake person taken from multiple perspectives. So if you want to be sure that your Tinder crush is a real person, insist on seeing two or more photos. If he's got a headshot and a picture of himself with a tiger, he's a real person. Of course the person writing to you could be someone other than the person in the picture, but the person in the picture is certainly real.

Ready to test how good you are at telling a real person from a fake one? You can take their test on Which Face Is Real.
