The hardest part of building a PC is picking the parts, especially when everyone around you seems to have an opinion. And no flame war is more prevalent than the NVIDIA snobs vs the AMD fanboys. What’s really going on with these two companies, and which card should you get?

Today’s showdown is going to be a bit different. Instead of directly comparing two graphics cards, we’re going to talk a bit about the companies who make them, where each manufacturer wins and loses, and how to pick the right card for you.

Price-to-Performance Is Always Changing

First, a little real talk: Anyone who tells you one of these companies “sucks” is not to be trusted. They both make unequivocally great video cards for PC gaming, and you should consider both for your next build.

By far the most important factor in choosing a video card is its price-to-performance ratio. How well does it fare in games against the other cards in the same price range? Neither company wins consistently on a price-to-performance basis, though at the time of this writing, AMD cards are a particularly good value. But this fluctuates often, and can even vary from card to card.

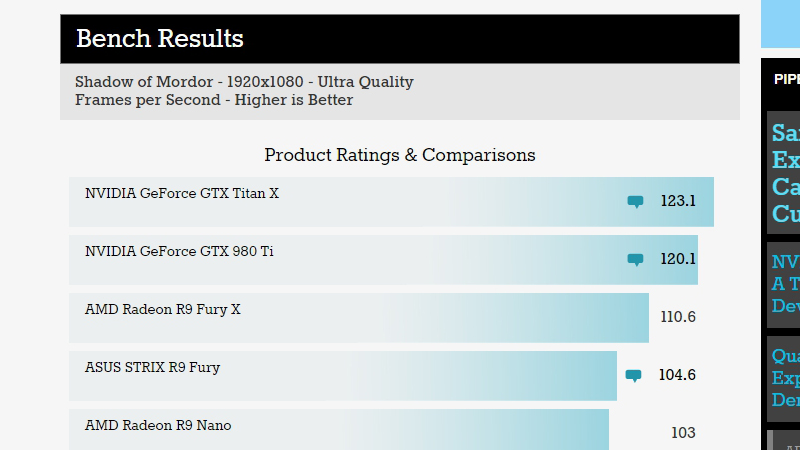

So when you decide on your budget, pick a few cards from each company that fit into your price range and start looking up benchmarks. AnandTech has a good benchmark tool that will show you how each card performs in different games (pay special attention to the games you actually play!). You’ll probably find that one card is consistently better for the money. Tom’s Hardware also publishes regular guides to the current crop of cards and which ones are the best for your hard-earned cash, so I recommend checking those out as well.
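If you want to make that comparison a little more systematic, here is a minimal sketch of the idea in Python. It assumes you have already looked up average frame rates for the games you play; the card names, prices, and FPS figures below are made-up placeholders, not real benchmark results, and `value_score` and `rank_by_value` are hypothetical helper names.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Card:
    name: str
    vendor: str
    price: float            # current street price in dollars
    fps: Dict[str, float]   # average FPS per game, copied from a benchmark site


def value_score(card: Card, games: List[str]) -> float:
    """Mean FPS across the games you care about, divided by price (FPS per dollar)."""
    mean_fps = sum(card.fps[game] for game in games) / len(games)
    return mean_fps / card.price


def rank_by_value(cards: List[Card], games: List[str], budget: float) -> List[Card]:
    """Drop cards over budget, then sort the rest from best to worst value."""
    affordable = [card for card in cards if card.price <= budget]
    return sorted(affordable, key=lambda card: value_score(card, games), reverse=True)


# Placeholder data for illustration only: replace with numbers you looked up yourself.
cards = [
    Card("Card A", "NVIDIA", 330.0, {"Game X": 60.0, "Game Y": 72.0}),
    Card("Card B", "AMD", 300.0, {"Game X": 58.0, "Game Y": 68.0}),
    Card("Card C", "NVIDIA", 550.0, {"Game X": 80.0, "Game Y": 95.0}),
]
my_games = ["Game X", "Game Y"]

ranked = rank_by_value(cards, my_games, budget=350.0)
for card in ranked:
    print(f"{card.name} ({card.vendor}): {value_score(card, my_games):.3f} FPS per dollar")
```

Averaging only over the games you actually play is a deliberate choice here: a card that tops a benchmark suite you never touch tells you little about your own experience. A single FPS-per-dollar number also ignores things like noise, power draw, and exclusive features, so treat the ranking as a starting point rather than a verdict.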

Each Has Its Own Exclusive Technologies

Price-to-performance is, by far, the most important factor in which card you pick. However, there are other, smaller ways NVIDIA and AMD try to stand out from one another.

NVIDIA has some exclusive big-name technologies, like PhysX, which isn’t in a ton of games but provides additional physics effects. In some cases, AMD has equivalent technologies, but NVIDIA often beats it to the punch. NVIDIA was the first to launch G-Sync monitors, for example, which adapt the monitor’s refresh rate to the game’s frame rate to eliminate nasty screen tearing. AMD’s FreeSync technology is very similar, but didn’t show up until after G-Sync was already on the market. FreeSync, however, is more open in nature, which means compatible monitors will be less expensive and (hopefully) more plentiful.

You might also consider NVIDIA’s ShadowPlay feature, which records your games to video, or AMD’s GVR, which isn’t quite as powerful but serves the same purpose (and came out a bit later). NVIDIA cards can also stream games to Shield devices, which is pretty cool if you want to play on a handheld device.

NVIDIA isn’t always first on the scene with new tech, of course. AMD came out with its TressFX hair physics before NVIDIA’s HairWorks, and it actually performs better, too. But NVIDIA has a reputation for pushing the envelope a bit more often, especially with big, marketable features, while AMD gains its edge by making its features more “open”.

Scandal After Scandal

Of course, no flame war would be complete without scandals in the press and gamers making half-founded accusations. For example, NVIDIA and AMD recently had a spat over their respective hair technologies. AMD’s TressFX (which actually came out first) creates some very realistic hair, but it works better on AMD cards. NVIDIA’s HairWorks does something similar, but works much better on NVIDIA cards than it does on AMD cards. So much so that after The Witcher 3 launched, AMD accused NVIDIA of purposely crippling performance on AMD cards. The two companies sniped at each other for a while, gamers took sides, and the battle still rages on.

And that’s just one example. AMD has a long-standing reputation for unreliable drivers and cards that run hot. NVIDIA users were upset when they found out that some of the GTX 970’s RAM ran at slower speeds than the rest. Many users cite disappointment over AMD cards that are merely “rebrands” of the previous generation. And current NVIDIA cards won’t be able to take advantage of some upcoming DirectX 12 features that AMD cards will, causing people to accuse NVIDIA of planned obsolescence.

You can see how the flame wars might get pretty heated.

Very few of these scandals are as simple as one side would have you believe. They may contain useful information, but beware of people who use them to claim that AMD “doesn’t innovate” or NVIDIA is “anti-consumer”. And beware of people making grand predictions about the future based on one benchmark or one marketing campaign. You can never know what the future will bring.

Buy What’s Right for You

When you’re building a PC, asking experienced builders for advice is usually a great idea. But when it comes to video cards, be prepared for some serious brand wars — and don’t let the opinions of others negate your own research.

First and foremost, look at which cards perform best in your budget. If you require any exclusive technologies (maybe you have an NVIDIA Shield, or a monitor that supports FreeSync but not G-Sync), that may sway your decision as well. And when it comes to the scandals…well, feel free to keep up with the news, but if it all feels like too much, buy the card that proves itself in the benchmarks and on the price tag. Brand loyalty won’t get you very far.

Comments

5 responses to “PC Graphics Card Showdown: NVIDIA Vs. AMD”

I take it that is a benchmark with just a single card right? Considering getting a 980 or a titan soon. A lot of people say go the 980GTX to me because of the price. Seeing that benchmark it doesn’t makes too much difference then? Except maybe for the extra cores on the titan?

Back in the day you just got the fastest pc, stuck in the latest voodoo and off you went, and now I am having to freaking read up on G and freesync and whatnot. Confusing. Thanks for clearing a lot up Lifehacker!

Article clearly written by a fanboy without the facts. You can tell it was written by a nTard.

First off, if you are going to bring up physics, and talk about nvidia physX, don’t just say “…AMD has equivalent technologies…”. AMD has HAVOK physics, which when you actually look at complete feature sets is far more rich in technology than the ever old physX. The problem is if you havok, physX dont get a benefit. You go physX, havok loses out. So developers usually pick what they prefer or use none at all. Hopefully with dx12 (or vulkan which will be superior) you can run generic code for physics, and then physX or havok and in turn do their own thing to enhance them thus allowing everyone to win.

Secondly, the way you write about g-sync. You can just read the attitude. Firstly you incorrectly write that “nvidia is usually first to pull a punch” and then reference g-sync. YES, nvidia released their shitty g-sync gimmick before AMD had their own “RELEASED” version. But keep in mind AMD has ALWAYS had the ability to use an “adaptive v-sync”, but AMD felt the users out there didn’t have a “want” for it. And personally, we don’t have a f**king want for it, only cheap skates who buy cheap gpu’s benefit from g-sync, everyone else has a high end card that will get way over the g-sync/freesync limit in fps therefore its not needed for any type of serious gaming rig. Limiting your fps is the dumbest f**king thing any pc user can do, and it seems to be a nvidia thing, and those who jump ship bitched so much about amd not having a limiter that they now include it in the gpu settings control panal…. sigh. plebs.

In that same paragraph you constantly push nvidia, it just rolls off your shitty pleb fanboy tongue.

Then you incorrectly bring up the witcher 3 and the hair problem. Are you really a pc person writing a truthful article? or a paid off pleb? or worse a console tard? or maybe just a fanboy?

The issue with the witcher 3 was that it was built using nvidia’s shitty gameworks pre-built garbage. Its the same f**king technology used back in the day from voodoo on their 3dfx glide, which nvidia happen to acquire in a buyout (google it pleb). gameworks works ONLY on nvidia, period, any kind of amd hardware will result in less than beneficial performance. when a game company happens to use amd’s havok for hair physics, although it wont work on nvidia, it doesn’t cripple your fps in “trying” to make it work via emulation. However, with gameworks, it heavily rely’s on nvidia’s tech, thus when the amd card tries to emulate the gameworks hair bullshit, it can’t, so it sends the shit to the cpu instead, which causes even more issues because it ends up eating all the cpu power. you would know this if you were an actual pc enthusiast.

Then you bring up the biggest piece of pleb fanboy bullshit, “amd is known for bad drivers” bull, f**king, shit. plebs have issues with drivers. ive been running BOTH nvidia and amd hardware since the dawn of both, and NEVER have i had a driver issue. its ALWAYS user error.

Worse you try to bring up amd card running “hot”, or did we forget the timeline of nvidia cards? amd released hot running card, nvidia fanboys scoff and laugh saying “cards should run cool”, amd releases a statement saying the cards were meant to run hot, nvidia fangirls call amd a liar. the very next f**king generation from both sides, amd ran cool and nvidia ran hot. amd kids laughed at nvidia tards for having a hot running card, after all why not rub it in their face like they did to amd fans? then nvidia did their own press release saying “oh, these cards were meant to run hot” and all the nvidia fans go “haha its supposed to run hot, no issues here, amd just sucks, and this generation may run cooler but its slower”. THE F**KING STUPIDITY. Whenever its the “home team” they believe it, whenever its the “away team” its all lies and bullshit. Its f**king pathetic. And from someone who starts their article with “Anyone who tells you one of these companies “sucks” is not to be trusted” yet you constantly bring up bullshit and hearsay like a f**king fanboy yourself.

EVEN F**KING WORSE you bring up nvidia’s 970 issues about memory, AND THEN GET IT COMPLETELY F**KING WRONG. It wasn’t that the 970’s memory was running at different speeds, its that the car should have had 4 f**king gb, but some only had 3.5 and others only 3gb of USABLE memory. You call yourself a f**king pc enthusiast writing this article? You are a f**king joke. WORSE YOU LINK AND ARTICLE WHERE NVIDIA “CLAIMS” THAT IT REALLY IS 4GB OF MEMORY, YET IF ITS 4 GB WHY CAN’T YOU USE IT ALL? AND NVIDIA SPEWS BULLSHIT UP YOUR ASSHOLE AND YOU FALL FOR IT.

Then you bring up “amd re-brands” but forgot that nvidia does re-brands too, quite f**king often, in fact IF you were a pc enthusiast you would know both companies do this. Its not uncommon, the only difference is AMD tells you its a f**king re-brand and nvidia you have to dig deep to find it.

When it comes down to it, this article can be summed up in one f**king sentence….

“Buy the f**king card that fits your budget and use it, because at the end of the day you have X money to use and no matter what you buy some f**ker is gonna tear your head off”.

I mean, I get trying to ACT non sided, but clearly, CLEARLY, this article is written by a small minded fanboy. Both teams are fantastic, get the f**k off the internet…….

lol, holy shit

Not to mention that AMD believes in open standards and NVidia sure as hell shares nothing

i love nvidia its durable, quiet and specially i love the software of nvidia compared to amd. Amd softwares and drivers are cheap just like their amd gpus