Dear Lifehacker, With all the buzz about learning to code, I’ve decided to give it a try. The problem is I’m not sure where to start. What’s the best programming language for a beginner like me? Signed, Could-Be Coder

Dear Could-Be,

That’s probably one of the most popular questions from first-time learners, and it’s something that educators debate as well. You can ask 10 programmers what the best language is to get your feet wet with, and you could get 10 different answers — there are thousands of options. Which language you start with depends not only on how beginner-friendly it is, but also the kind of projects you want to work on, why you’re interested in coding in the first place, and perhaps also whether you’re thinking of doing this for a living. Here are some considerations and suggestions to help you decide.

Why Do You Want to Learn to Code?

Depending on what it is you want to make or do, your choice might already be made for you. To build a website or web app, for example, you should learn HTML and CSS, along with JavaScript and perhaps PHP for interactivity. If your focus is mostly or only on building a mobile app, then you can dive right into learning Objective-C for iOS apps or how to program with Java for Android (and other things).

If you’re looking to go beyond one specific project or specialty, or you want to learn a bunch of languages, it’s best to start by learning the basic concepts of programming and how to “think like a coder”. That way, no matter what your first programming language is, you can apply those skills towards learning a new one (maybe in as little as 21 minutes). Even kids’ coding apps can be useful to start with. For example, the first formal programming course I took (well, other than BASIC back in fourth grade) was Harvard’s CS50, which you can take for free. Professor Malan starts the course off with Scratch, a drag-and-drop programming environment built for kids that teaches coding basics and logic while helping you create something cool, and then he proceeds to teach you C.

We’ve featured several other excellent resources for learning to code over the years, such as the interactive course Codecademy, but even with those you still need to choose which language to start with. So let’s take a look at the differences between the more popular languages and which are most often recommended as a starter language.

The Most-Often Recommended Programming Languages for Beginners

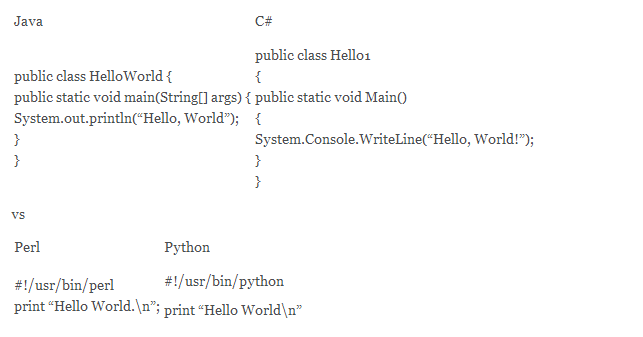

Most of the “mainstream” programming languages, such as C, Java, C#, Perl, Ruby and Python, can do the same (or nearly the same) tasks as one another. Java, for example, works cross-platform and is used for web apps and applets, but Ruby can also handle large web apps, and Python apps similarly run on Linux and Windows. SOA World points out that because many languages are modelled after each other, their syntax and structure are often nearly identical, so learning one often helps with learning the others. For example, to print “Hello World”, Java and C# are syntactically similar, just as Perl and Python are:

They differ, however, in how easy they are to set up and get into. SOA World continues:

Hey, by the way, if you look closely at those examples, you’ll notice that some are simple, others are complex, and some require semicolons at the ends of lines while others don’t. If you’re just getting started in programming, it’s sometimes best to choose a language without many syntactical (or logical) rules, because it allows the language to “get out of its own way”. If you’ve tried one language and really struggled with it, try a simpler one!

Here’s a quick comparison of the most popular programming languages:

C: Trains You to Write Efficient Code

C is one of the most widely used programming languages, and there are a few reasons for this. As noted programmer and writer Joel Spolsky says, learning C is to programming what learning basic anatomy is to a medical doctor. C is a low-level language that sits close to the machine, so you’ll learn how a program interacts with the hardware and learn the fundamentals of programming at the lowest (hardware) level; C is also the foundation of Linux/GNU. You learn things like debugging programs, memory management, and how computers work that you don’t get from higher-level languages like Java, all while preparing you to code efficiently in other languages. C is the “grandfather” of many higher-level languages, including Java, C# and JavaScript.

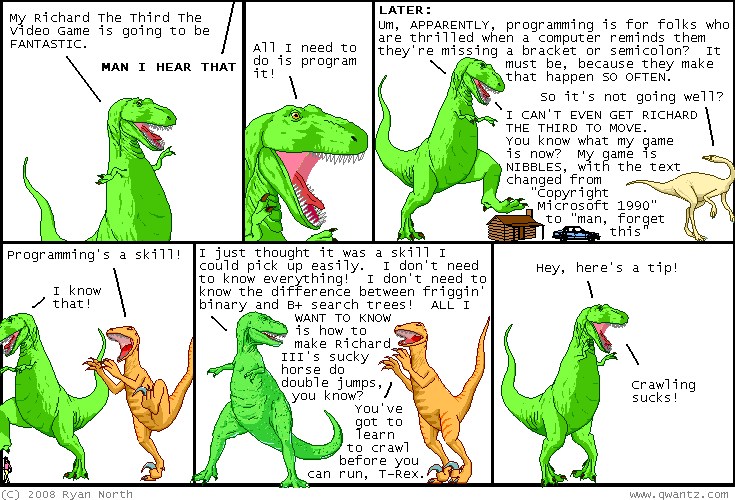

That said, coding in C is stricter and has a steeper learning curve than other languages, and if you’re not planning on working on programs that interface with the hardware (tap into device drivers, for example, or operating system extensions), learning C will add to your education time, perhaps unnecessarily. Stack Overflow has a good discussion on C versus Java as a first language, with most people pointing towards C. However, personally, although I’m glad I was exposed to C, I don’t think it’s a very beginner-friendly language. It will teach you discipline, but you’ll have to learn an awful lot before you can make anything useful. Also, because it’s so strict, you might end up frustrated like this:

Java: One of the Most Practical Languages to Learn

Java is the second most popular programming language, and it’s the language taught in Stanford’s renowned (and free) Intro to CS programming course. Java enforces solid object-oriented programming (OOP) principles that are also used in modern languages including C++, Perl, Python and PHP. Once you’ve learned Java, you can learn other OOP languages pretty easily.
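The object-oriented ideas mentioned here (classes that bundle data with behaviour, inheritance, and polymorphism) look much the same from one language to the next. As a rough sketch, here they are in Python rather than Java; the Shape, Rectangle and Circle classes are made-up examples, not taken from the Stanford course.

```python
import math


class Shape:
    """Base class: every shape can report its area and describe itself."""

    def area(self) -> float:
        raise NotImplementedError("subclasses must provide area()")

    def describe(self) -> str:
        # Polymorphism: self.area() runs whichever subclass version applies.
        return f"{type(self).__name__} with area {self.area():.2f}"


class Rectangle(Shape):  # inheritance: a Rectangle is a Shape
    def __init__(self, width: float, height: float) -> None:
        # Encapsulation: the data lives inside the object alongside its methods.
        self.width = width
        self.height = height

    def area(self) -> float:
        return self.width * self.height


class Circle(Shape):
    def __init__(self, radius: float) -> None:
        self.radius = radius

    def area(self) -> float:
        return math.pi * self.radius ** 2


for shape in [Rectangle(3, 4), Circle(1)]:
    print(shape.describe())
# Rectangle with area 12.00
# Circle with area 3.14
```

A Java version would declare the types explicitly and would likely mark `area()` as `abstract`, but the structure, and the concepts, carry over directly.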

Java has the advantage of a long history of usage. There are lots of “boilerplate” examples, it’s been taught for decades, and it’s widely used for many purposes (including Android app development), so it’s a very practical language to learn. You won’t get machine-level control, as you would with C, but you’ll be able to access and manipulate the most important parts of the computer, like the filesystem, graphics and sound, for any fairly sophisticated, modern program, and that program can run on any operating system.

Python: Fun and Easy to Learn

Many people recommend Python as the best beginner language because of its simplicity yet great capabilities. The code is easy to read and enforces good programming style (like indenting), without being overly strict about syntax (things like remembering to add a semicolon at the end of each line). Patrick Jordan at Ariel Computing compared the time it takes to write a simple script in various languages (BASIC, C, J, Java and Python) and determined that while the other languages shouldn’t be ignored, Python:

requires less time, less lines of code, and less concepts to be taught to reach a given goal. […] Finally programming in Python is fun! Fun and frequent success breed confidence and interest in the student, who is then better placed to continue learning to program.
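To make the point about enforced style concrete, here’s a minimal Python sketch (our own, not from Jordan’s comparison): blocks are marked by indentation alone, with no braces or semicolons, and code whose layout is wrong is rejected before it ever runs. The function names are illustrative.

```python
def count_evens(numbers):
    count = 0
    for n in numbers:
        if n % 2 == 0:      # the indented line below is the body of the if
            count += 1
    return count            # dedenting ends the loop; no braces or semicolons


# The same kind of loop with its body NOT indented, kept as text so we can test it.
BADLY_INDENTED = "for n in range(3):\nprint(n)\n"


def layout_is_enforced():
    """Return True if Python refuses to compile the badly indented loop."""
    try:
        compile(BADLY_INDENTED, "<example>", "exec")
    except IndentationError:
        return True
    return False


print(count_evens([1, 2, 3, 4]))  # 2
print(layout_is_enforced())       # True
```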

SOA World says Python is an absolute must for beginners who want to get their feet wet with Linux (or are already familiar with Linux). Python’s popularity is also rising quickly thanks to wide adoption on popular websites like Pinterest and Instagram.

JavaScript: For Jumping Right in and Building Websites

JavaScript (of little relation to Java) requires the least amount of set up to get started with, since it’s already built into web browsers. O’Reilly Media recommends you start with JavaScript because it has a relatively forgiving syntax (you can code loosely in JavaScript), you see immediate results from your code, and you don’t need a lot of tools. In our own Learn to Code night school we use JavaScript to show you the basics like how variables and functions work. If you want to make cool interactive things for the web, JavaScript is a must-have skill.

Choosing Your Path

One last consideration is whether or not you might want to go from coding as a hobby to doing it as a career. Dev/Code/Hack breaks down the different job roles and the skills you should pick up for them:

Back-end/Server-side Programmer: Usually uses one of the following: Python, Ruby, PHP, Java or .NET. Has database knowledge. Possibly has some sysadmin knowledge.

Front-end/Client-side Programmer: HTML, CSS, JavaScript. Possibly has design skill.

Mobile Programmer: Objective-C or Java (for Android). HTML/CSS for mobile websites. Potentially has server-side knowledge.

3D Programmer/Game Programmer: C/C++, OpenGL, Animation. Possibly has good artistic skill.

High-Performance Programmer: C/C++, Java. May have background in mathematics or quantitative analysis.

In the end, there’s no one way to get started learning to code. The most important thing is to learn the fundamentals by “scratching your itch”, so to speak: working on a problem you want to solve or something you want to build. As the Programming is Terrible blog says:

The first programming language you learn will likely be the hardest to learn. Picking something small and fun makes this less of a challenge and more of an adventure. It doesn’t really matter where you start as long as you keep going — keep writing code, keep reading code. Don’t forget to test it either. Once you have one language you’re happy with, picking up a new language is less of a feat, and you’ll pick up new skills on the way.

Once you’ve decided, the previously mentioned Bento will suggest the resources you need and the courses to take after you’ve learned your first language.

Cheers

Lifehacker

Got your own question you want to put to Lifehacker? Send it using our contact form.

Comments

33 responses to “Ask LH: Which Programming Language Should I Learn First?”

I’d recommend Java / C# to start out with. There’s TONNES of resources out there to help you get started, and both have pretty good IDEs to work with, that really assist in the learning process.

I think the main advantage of those 2 languages is they’re kind of a middle tier in terms of learning. They can be used as an introduction to more complex languages with steeper learning curves, like C/C++. They’re likely not as easy to pick up as some of the scripting languages, but they do teach you good fundamentals that can be applied across many other languages.

Also, Java and C# are fairly interchangeable. Hardcore Java and C# developers just cringed there, but for day-to-day usage the syntax is pretty much the same; it’s just the ins and outs of some of the more specific or advanced features where things differ.

I’d have to agree with you there. But I’d recommend starting with Java before C#, simply because Java handles memory management for you.

@jacrench, C# is also a managed language. C# and Java are really neck and neck feature-wise; the choice between the two really comes down to culture and need.

C# is exactly the same as Java here; it’s C and C++ that require manual memory management. Any .NET language will have automatic memory management.

Java will complicate the introduction to programming due to the mish-mash of tools and SDKs. Try grabbing Visual Studio 2012/2013 Express from MS to start with and you’ll be up and running very quickly.

So true, I mean I have never seen a Java program with a memory leak!!!!!!!

I’ve never seen a Java program that wasn’t a sluggish memory hog

Everyone knows Java was invented so companies could hire bad programmers 🙂

C# is a managed environment as well, that’s one of the things they have in common. But I agree, learn Java first, it’ll teach you the fundamentals without complicating it up with pointer/reference differences, malloc problems and overruns that C/C++ is prone to.

@moonhead I’ve been programming in Java for 15 years and C# basically since .NET came out and I agree with you =) Aside from some really nice additions and fixes in C# (it’s a much nicer language than Java), the two languages syntax-wise are basically the same. Learn one, you’ll have 80% of what you need to learn the other.

Well there you go. I didn’t know C# did that. My experience with C only goes as far as C++, I just assumed. Bonus really.

C# doesn’t really have much in common with C/C++; it’s basically Microsoft’s version of Java (again, I suspect I’ve just made both the Java and C# developers of the world cringe by being so simplistic with my comparison).

Except for the fact that both C# and Java handle the memory management for you.

This article sucks: the Java and C# examples aren’t using correct indentation and look like a dog’s breakfast. Suggesting one language over another because of ‘all those difficult to use curly braces and brackets’ seems laughable. I guess it’s no wonder there are so many programmers out there who write truly terrible code.

This. If you want to be a good programmer you need to understand what you’re doing, and taking away memory management, let alone proper syntax, doesn’t help with that at all. Using higher-level managed languages like C# and Java without an understanding of what you’re doing, and of why and when the approaches they use are better, is like jumping straight to algebra and trigonometry without learning subtraction: you’ve missed some absolutely fundamental things about programming. Especially if the goal is to learn to “think like a programmer”.

Not saying it’s impossible to pick those things up if you start with Java or C#, but it makes it much harder.

He wasn’t saying memory management was bad, he was correcting Jacrench who implied Java had memory management but C# didn’t. I strongly disagree with the rest of what you said – memory management isn’t a crutch, it’s a tool that takes away the majority of opportunity for the developer to make a mistake. You can’t get buffer overruns or underruns with managed memory, you can’t index memory outside of allocated program space, garbage collection prevents memory leaks.

While you could argue good programmers know how to avoid doing those things, it’s also the case that doing that is 99% a chore that’s only useful in the 1% of cases where the programmer might actually want to tweak memory allocation directly.

There’s a reason why professional development has progressively moved from low level to high level languages since the dawn of programming: understanding the nuts and bolts and working in the lowest levels of the system is great and all, but it’s also by far the least efficient way of doing things. Except in rare and specific cases, nobody wants you tinkering around in the assembly for your program trying to find low level optimisations. These days, the same can be said about spending time managing memory allocations, remembering whose job it is to delete what object where and making sure that across the entire project there isn’t a single missed deletion, boundary overrun or anything else. Optimisation is easier and much faster than that in modern high level languages.

I agree on the codestyle thing though, that is important. Other people need to be able to read your code, and you need to be able to read your code when you come back to it 6 months later.

I have to agree with most of this.

Knowing how memory management is working behind the scenes is useful. Not having to do it manually is probably more so, since in code requiring explicit memory management something like 50% of bugs will be related to memory management – dropped pointers, dereferencing pointers after they are freed, allocating insufficient space for arrays, and other related shenanigans.

Outsourcing memory management to the interpreter or compiler results in code that in general will be a bit slower, but significantly more readable and maintainable.
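One way to see outsourced memory management in action is to watch an object disappear without any explicit free. This is a sketch that assumes CPython, where an object is reclaimed as soon as its last reference goes away and a separate cycle collector handles objects that only refer to each other; other Python implementations may reclaim memory later. The Node class is hypothetical.

```python
import gc
import weakref


class Node:
    """Hypothetical object type, used only to watch when memory is reclaimed."""

    def __init__(self, name):
        self.name = name
        self.partner = None


def freed_after_del():
    node = Node("a")
    probe = weakref.ref(node)  # a weak reference does not keep node alive
    del node                   # drop the only strong reference; no free() call
    return probe() is None     # CPython reclaims the object immediately


def freed_cycle():
    a, b = Node("a"), Node("b")
    a.partner, b.partner = b, a  # a cycle: each keeps the other's refcount above zero
    probe = weakref.ref(a)
    del a, b
    gc.collect()                 # the cycle collector finds and frees the pair
    return probe() is None


print(freed_after_del())  # True on CPython
print(freed_cycle())      # True
```

Neither function frees anything by hand, which is exactly the bookkeeping the comment describes handing over to the runtime.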

If I had to prioritise the requirements of a program I would say:

1) It must work.

2) It must be understandable by somebody without significant prior familiarity with the code; that is, it must be maintainable.

3) It should work as efficiently as possible.

There are applications where (3) becomes more important than (2), but I can’t think of any case where having highly efficient code that does not work is advantageous.

It is possible to write readable code in any language (with some odd exceptions like APL), just as it is possible to write unreadable code in any language. But some languages make it easier.

Personally I would suggest starting with something like Java or Python then learning C or some other low-level language later on. Python has the almost unique advantage that it syntactically enforces a layout convention.

Hey guys, here’s a tip: please don’t comment if you don’t know the languages you’re attempting to make “factual” statements about. If you don’t know what you’re talking about, just let the grown ups do the talking instead.

Was going to come in and say just that.

Depends what you want to do really – if your goal is to do web development I’d say start with JavaScript; with Node.js, you may never need another language. Although I do recommend a strongly typed language for a learner.

If you want to do general purpose development, personally I recommend starting with C#. I am biased – I’m a professional .NET dev.

My rationale for C# as a first general purpose language is that you can use it for anything on any platform, especially if you want to do mobile: Xamarin and Unity are both .NET/Mono based.

On the other hand while you can do almost anything for free with .NET, the fact that the top tools are all paid for is understandably a hurdle for some.

I’m a software engineer by trade.

I’d recommend Java as a starting point to learn basic programming concepts – it’s a lot easier to write a program because the compiler does so many nice checks for you (and there’s no unexplained segfaults!).

Then move on to C/C++ to learn the lower level functionality (i.e. memory management, linking+compiling, pointers).

I would even suggest a look at an instruction-based programming emulator (when I went through uni they had a DLX emulator, which made you write programs as processor instructions and let you directly play around with memory addresses and processor registers. It helped me better understand how pointers and memory management worked).
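If you can’t get hold of an emulator like the one described, a toy register machine gives a taste of the idea. The sketch below is not DLX: its tiny instruction set (`li`, `add`, `sw`, `lw`, plus an indirect load `ldr`) is invented purely for illustration, with a register that holds a memory address standing in for a pointer.

```python
def run(program, num_registers=4, memory_size=8):
    """Execute a list of (opcode, *operands) tuples on a toy register machine."""
    regs = [0] * num_registers   # a handful of "processor registers"
    mem = [0] * memory_size      # a small block of addressable memory
    for op, *args in program:
        if op == "li":           # load immediate: regs[d] = constant
            d, value = args
            regs[d] = value
        elif op == "add":        # regs[d] = regs[s] + regs[t]
            d, s, t = args
            regs[d] = regs[s] + regs[t]
        elif op == "sw":         # store word: mem[addr] = regs[s]
            s, addr = args
            mem[addr] = regs[s]
        elif op == "lw":         # load word: regs[d] = mem[addr]
            d, addr = args
            regs[d] = mem[addr]
        elif op == "ldr":        # indirect load: regs[s] holds an address, like a pointer
            d, s = args
            regs[d] = mem[regs[s]]
        else:
            raise ValueError(f"unknown instruction: {op!r}")
    return regs, mem


program = [
    ("li", 0, 2),      # r0 = 2
    ("li", 1, 3),      # r1 = 3
    ("add", 2, 0, 1),  # r2 = r0 + r1 = 5
    ("sw", 2, 6),      # mem[6] = r2
    ("li", 1, 6),      # r1 = 6, so r1 now "points at" mem[6]
    ("ldr", 3, 1),     # r3 = mem[r1] = 5
]
regs, mem = run(program)
print(regs, mem)  # [2, 6, 5, 5] [0, 0, 0, 0, 0, 0, 5, 0]
```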

Then once you have that solid core of knowledge you can move onto the lazy, relaxed, type-less, interpreted languages like JavaScript/PHP.

My old uni has moved to starting its CS degree with JavaScript instead of Java, and then moving to C/C++, because they feel that Java is becoming irrelevant (it’s not like there are any huge platforms that support Java *cough*android*cough*).

IMO that’s a TERRIBLE idea because it teaches SO many bad habits and makes it so hard to move to a compiled language.

TL;DR:

Java to C/C++ to whatever you want to do with your life:

Web Developer: PHP/JavaScript/HTML/CSS/SQL for startup web development, C#(ASPX)/JavaScript/HTML/CSS/SQL for big business web development – good knowledge of network protocols is recommended.

Web Designer: HTML/JavaScript/CSS – good design/art skills required.

Games: C/C++, OpenGL, UnrealScript – needs a strong aptitude in maths (esp for engine programming and design).

Research: Any language will suit, a strong background in maths is recommended, need to have a way with words to write and ‘sell’ your research papers to journals.

If your old uni thinks Java is declining, they have zero awareness of the business world whatsoever. Which fits with the problem universities have with fast-moving subjects like computing, they’re always well behind the curve. You don’t need a uni degree to do professional programming, it just helps to get the interview.

Actually, the experience from OP, and your own first sentence, refutes your second claim. If universities were behind the curve, then the “data” that Java is falling out of favor would be ahead of the curve. This isn’t really about universities being behind the curve, it’s about one university being completely wrong. Universities may be behind the curve (some are, some aren’t), but that really isn’t the issue with OP’s point. If anything, it seems like his university is trying (and failing) to be ahead of the curve by teaching JS first.

As far as degrees go: it’s not the piece of paper that you get from an education, it’s the knowledge and experience. You CAN get that from anywhere, but it’s a lot easier to get them from a place that will motivate you (forcibly) to get it. Unless you are extremely passionate about learning on your own, formal education helps a great deal. On another note, as a professional developer who interviews candidates, I can tell you that we don’t judge candidates by their degree when offering interviews/phone screens, but candidates with formal experience tend to do much better in interviews than those without the fundamentals. The real lesson is that if you do want to learn on your own, you have to pick up the fundamentals (data structures, algorithms). If you don’t know at least three different types of sorting algorithms, and have never done tree traversal before, you still have a lot of learning to do.
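As a rough illustration of the fundamentals mentioned above, here is one simple sorting algorithm (insertion sort) and an in-order traversal of a binary search tree, in Python. These are textbook versions written for clarity, not performance; the `TreeNode` and `insert` helpers are our own.

```python
from dataclasses import dataclass
from typing import List, Optional


def insertion_sort(items: List[int]) -> List[int]:
    """Return a sorted copy, shifting each element left until it fits."""
    result = list(items)
    for i in range(1, len(result)):
        current = result[i]
        j = i - 1
        # Move larger elements one slot right to make room for `current`.
        while j >= 0 and result[j] > current:
            result[j + 1] = result[j]
            j -= 1
        result[j + 1] = current
    return result


@dataclass
class TreeNode:
    value: int
    left: Optional["TreeNode"] = None
    right: Optional["TreeNode"] = None


def insert(root: Optional[TreeNode], value: int) -> TreeNode:
    """Insert into a binary search tree: smaller values go left, others right."""
    if root is None:
        return TreeNode(value)
    if value < root.value:
        root.left = insert(root.left, value)
    else:
        root.right = insert(root.right, value)
    return root


def in_order(root: Optional[TreeNode]) -> List[int]:
    """Visit the left subtree, then the node itself, then the right subtree."""
    if root is None:
        return []
    return in_order(root.left) + [root.value] + in_order(root.right)


data = [5, 2, 8, 1, 9, 3]
root = None
for x in data:
    root = insert(root, x)

print(insertion_sort(data))  # [1, 2, 3, 5, 8, 9]
print(in_order(root))        # [1, 2, 3, 5, 8, 9]
```

An in-order traversal of a binary search tree always visits its values in sorted order, which is why both lines print the same list.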

A lot of companies don’t like to use Java because their security teams read about potential holes caused by bugs in Java (or by how it’s built), and it scares them into using other technology.

Also a lot of bigger companies work very heavily with Microsoft products, and they don’t care for cross platform because of their SOE, so they lean toward using C/C#.

In terms of research (which the CS degree is geared toward), Java isn’t that widely used because it’s slow compared to natively compiled languages (starting up and running the JVM is costly).

I 100% agree that you don’t need a degree to learn programming, but going to uni gives you access to resources that aren’t readily available outside of it (i.e. professors who really know their stuff). And generally the course plan for the degree is designed to teach you the basics and then layer the knowledge in a logical way. Plus, as part of my degree, they had a few classes on public speaking, interview techniques and resume writing, and also numerous software engineering classes (writing documentation, project planning and execution, etc).

You can’t get that sort of strong knowledge and experience base just by learning on your own or by doing a single language programming class at a TAFE/Learning institute.

Plus having access to professors that are on the forefront of research (my Uni was very heavily into computer vision whilst I was there), you get to see and learn some really cool stuff.

These are all “technical” languages, but I think a beginner would get far more out of learning something like Visual Basic for Applications (VBA) for mucking about in Excel, Word and Access. Pretty much any office work environment uses MS Office these days, and VBA proficiency goes a long way in getting a job in an office.

VBA is horrible to “learn” though. The actual environment you’re working in is clunky and very poor for beginners. It’s actually quite powerful (you almost have the full power of Visual Basic 6.0), but when comparing to an environment like Visual Studio or Eclipse it’s not great for beginners.

Also VBA encourages some very bad practices, not a huge issue if you’re an office worker using Office and VBA for automation, but if you’re wanting to become a professional developer, it may lead you down some very wrong paths…

VBA can’t short circuit conditionals. Any language that can’t do that goes straight in the bin as far as I’m concerned =)
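For anyone unfamiliar with the term: short-circuit evaluation means the right-hand side of `a and b` (or `a or b`) is only evaluated if the left-hand side hasn’t already decided the result. Python’s `and` and `or` short-circuit, whereas classic VBA’s `And` and `Or` evaluate both sides. A minimal Python sketch; the helper names are illustrative:

```python
calls = []


def is_positive(n):
    calls.append(n)  # record every call so we can see what was evaluated
    return n > 0


def first_is_positive(items):
    # `and` stops at the first falsy operand: for an empty list, items[0]
    # is never evaluated, so no IndexError is raised.
    return bool(items) and is_positive(items[0])


print(first_is_positive([]))    # False, and is_positive was never called
print(first_is_positive([4]))   # True
print(calls)                    # [4]
```

This is what makes a one-line guard like `items and items[0] > 0` safe; without short-circuiting you’d need nested `If` blocks to avoid indexing an empty list.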

Start with JavaScript because the syntax is very forgiving and familiar (handy when you want to try C, C++, PHP, Java, C#, etc. later on), you don’t need to install anything to compile/run it, you’ll be able to do a lot, you won’t need to worry about memory allocation, and you can easily share what you make with friends and run it on any phone.

Then go for Python or C++ when you want to make stuff that uses hardware more.

Forgiving syntax is not necessarily a plus when learning to program. Unforgiving syntax teaches attention to detail, which is one of the more essential programming skills.

You’ll spend your first six months swearing at yourself and the compiler/interpreter about missed semicolons, and the remainder of your career overwhelmingly grateful that you can spot minor errors at a glance.

Not all minor errors are syntax errors. The distinction in C between = and == is easy to miss, for example.
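Python sidesteps this particular trap: an assignment isn’t allowed as an `if` condition, so the typo is caught as a syntax error before the program runs. A quick check, using the built-in `compile` to test source strings without running them (the `compiles` helper is our own):

```python
def compiles(source: str) -> bool:
    """Return True if `source` is syntactically valid Python."""
    try:
        compile(source, "<example>", "exec")
    except SyntaxError:
        return False
    return True


print(compiles("x = 5\nif x == 5:\n    pass\n"))  # True: a comparison
print(compiles("x = 5\nif x = 5:\n    pass\n"))   # False: assignment is rejected
```

Since Python 3.8 there is also `:=` for the cases where you really do want assignment inside an expression, and its different spelling keeps it from being mistaken for `==`.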

Concepts are more important than syntax, syntax changes with every language, it’s better they understand concepts like expressions, branching, looping, functions and scope to be able to break processes down into steps.
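The concepts listed here fit in a few lines of any language. A small Python sketch with each one labelled; the task (classifying numbers) is just an arbitrary example:

```python
LIMIT = 10  # module-level (global) name, readable inside the functions below


def classify(n: int) -> str:
    # Function: a named, reusable set of steps with its own local scope.
    label = "even" if n % 2 == 0 else "odd"  # expression producing a value
    if n > LIMIT:                            # branching
        label += ", large"
    return label


def classify_all(numbers):
    results = []                             # local variable: exists only during this call
    for n in numbers:                        # looping
        results.append(classify(n))
    return results


print(classify_all([3, 4, 12]))  # ['odd', 'even', 'even, large']
```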

It is much harder to find logic errors in code, because the code compiles/runs but doesn’t execute properly. It requires more attention to detail to realise what’s actually wrong with your code rather than remembering your semicolons.

Using = instead of == is a logic error, and you’d get the same behaviour if you tried that in JS.

It is EASY to find syntax errors because the compiler will tell you where it is and why it can’t read your code. You can worry them with semicolons, segfaults later if they actually want to program serious things in another language.

JS is forgiving because it won’t bother you with missing semicolons, or segfaults (it’ll just tell you the value is undefined and the loop will mess up a lot), and being nontyped allows variables to be flexible which is fine because they don’t need to know what’s happening in memory just yet, all they need to know is there are numbers, strings and objects for now, which means the learning curve is shorter and they can start writing code that does stuff much sooner.

@bones: your argument seems logical and well intentioned, but experience dictates that this doesn’t work in practice. The problem with your hypothesis is you’re assuming that a beginner can differentiate concepts from syntax. The reality is most beginners cannot.

A relaxed syntax causes beginners to learn bad habits and then associate those bad habits with the concepts they are learning. Here’s a really basic one: indexing in JS does not raise bound errors, which means beginners don’t have to deal with checking bounds– this however is one of the biggest mistakes beginners can make, because most beginners will already run into bound checking issues at least once along their path. The stringent checks in languages like Java force beginners to think about the boundaries of their data structures, allowing them to learn and focus on fixing those issues more quickly. There’s an underlying concept to bounds checking that drops down to the very nature of lists as data structures. If the syntax of your language is completely ignorant of that concept, that will leak into your understanding of said concepts.
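Python sits on the strict side of this argument: reading past the end of a list raises an `IndexError` instead of quietly handing back something like JavaScript’s `undefined`. One caveat for beginners is that negative indices count back from the end, so the valid range is `-len(items)` to `len(items) - 1`. A minimal sketch; `get_or_default` is a hypothetical helper:

```python
scores = [90, 75, 82]

print(scores[2])   # 82: the last valid index is len(scores) - 1
print(scores[-1])  # 82: negative indices count back from the end

try:
    print(scores[3])        # one past the end
except IndexError as err:
    print("caught:", err)   # caught: list index out of range


def get_or_default(items, index, default=None):
    """Explicit bounds check, the kind of guard you'd write by hand in JavaScript."""
    if -len(items) <= index < len(items):
        return items[index]
    return default
```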

Experience shows that you should teach form first. You can look at sports, music, and pretty much any other trade for examples. Before you start punching your sparring partner in boxing, you spend time learning footwork. Before you ever play a single song on piano, your teacher will run you through weeks of playing “do re mi …” in multiple keys. You aren’t learning concepts of music or boxing, you’re just learning the syntax of these art forms. How to move your feet around the ring, how to move your fingers on the instrument. That way once you start learning concepts, you won’t get sloppy and hurt yourself. Incidentally, many piano players and musicians tend to get carpal tunnel, and typically this is caused by bad form. People really do get hurt when they don’t understand syntax.

If they don’t check array bounds in JS, their code just won’t work as expected, they’ll just get undefined values which will ripple through the code, they will still need to be aware of array boundaries to get desired output, so where’s the problem?

Can you give me a better example?

It’s not like JS is a broken language, you just don’t need to worry about compilers, environmental variables, segfaults and all the other potential stumbling blocks that would scare away the curious.

JS is a simple but powerful language, and that is why it is good for beginners and pros.

If you add a number and a string, JS will just concatenate them, which is simpler and makes sense (which is why python, php and others do it as well). If they decide to learn C or Java later and try that, they’ll just find out they have to use (or write) a separate function to do the same thing. They’ll have to break the habit, problem?
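One correction worth noting: Python does not concatenate a number and a string. `1 + "2"` raises a `TypeError`, and you have to convert explicitly with `str()` or use an f-string. (Java, for its part, does concatenate with `+` whenever either operand is a `String`.) A quick check in Python; `try_add` is our own helper:

```python
def try_add(a, b):
    """Return a + b, or a short description of the TypeError Python raises."""
    try:
        return a + b
    except TypeError as err:
        return f"TypeError: {err}"


print(try_add(1, 2))      # 3
print(try_add("1", "2"))  # 12 (string concatenation)
print(try_add(1, "2"))    # TypeError: unsupported operand type(s) ...
print(str(1) + "2")       # 12: explicit conversion
print(f"{1}2")            # 12: f-string formatting
```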

They can pick up bad habits in any language. What if someone wrote every loop as recursion because their first language was Haskell? What if someone made all their variables global? What if someone gets totally stuck with pointers because they started with Java? What if someone keeps forgetting to write braces because they started with Python? The syntax to increment is different in many languages.

If they keep coding, they will encounter different things that will require them to change their programming style, that’s why programming is very different from other disciplines, you really have to be flexible, and getting rigid syntax out of the way is a good step towards that.

If you’re just learning for shits and giggles, pick a language that teaches you the basics without causing a brain aneurysm. If you’re managing pointers and memory allocations in 2013 as a first language, you are mad. Things have moved on since then.

I’d pick something like Ruby or Groovy where you get a full language that teaches you the basics (fundamental data types, flow control, abstract structures, design patterns) as well as giving you functional software with the minimum of setup and maximum of customization.

If you’ve ever coded in C#, as a novice you’ll quickly figure out: it’s Microsoft’s way or the highway.

With Ruby and Groovy, you can do whatever you want, however you want. Nobody is going to yell at you.

Most importantly: your first program is going to be a mess.

So is your second one.

And your third.

You will get better.

Years down the line, you’ll meet someone with actual skill and they’ll take you under their wing.

Then you’ll become great.

Then a couple of years after that, you’ll realise you still really suck, and you were wrong to think you were great.

Then you’ll meet someone who teaches you it’s not about learning and amassing amazing quantities of knowledge, it’s about rolling with it and being flexible.

And then you won’t care and you’ll enjoy coding in many languages, and oddly, your code will be simple, efficient, maintainable, sustainable, easy to test and enjoyable to write.

It can take an entire career to get to this point, or a couple of years.

Depends on the person and their experiences.

Back in my day, there was BASIC and COBOL. Both still worth a shot if you’re just getting started. Sure they are dated but there’s a reason they were so ubiquitous 30 years ago, simple, lightweight, and easy to learn.

Whatever language you choose, unless you’re extremely motivated and want to spend a few days learning the interface and syntax instead of how to code, spend some money and do a class. Even a short night course will give you a solid basis for future self-directed learning.

A lot of people miss that learning a language (syntax, expressions etc) is secondary to learning to think logically – breaking down systems into I/O, reproducible problems into loops etc. This is where a lot of people (and courses) break down, because they jump headlong into ‘code monkeying’ without actually explaining how to approach a problem programmatically. I’ve been a freelance web developer (PHP/Java/C#) for 6 or so years now, and that was the biggest thing I had to learn to deal with.
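To make “breaking a problem down into I/O and loops” concrete, here is a minimal Python sketch that splits a hypothetical task (totalling an expense list typed one `name, amount` item per line) into input parsing, a processing loop and output formatting, each in its own small function that can be tested on its own.

```python
from typing import Dict, List, Tuple


def parse_lines(text: str) -> List[Tuple[str, float]]:
    """Input: turn 'name, amount' lines into (name, amount) pairs, skipping blanks."""
    entries = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        name, amount = line.split(",")
        entries.append((name.strip(), float(amount)))
    return entries


def total_by_name(entries: List[Tuple[str, float]]) -> Dict[str, float]:
    """Processing: one loop that keeps a running total per name."""
    totals: Dict[str, float] = {}
    for name, amount in entries:
        totals[name] = totals.get(name, 0.0) + amount
    return totals


def format_report(totals: Dict[str, float]) -> str:
    """Output: one line per name, sorted alphabetically."""
    return "\n".join(f"{name}: {amount:.2f}" for name, amount in sorted(totals.items()))


raw = """
coffee, 4.50
lunch, 12.00
coffee, 3.50
"""
print(format_report(total_by_name(parse_lines(raw))))
# coffee: 8.00
# lunch: 12.00
```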

As others have said it really depends on your motivation for learning to code. You are never going to be a good coder without doing a lot of coding. If you are trying to learn in your spare time, just for interest, then you should pick a project that you find fun and then choose the appropriate language based on that. Something like Unity http://unity3d.com is extremely fun and free and gives you the opportunity to learn C# and C/C++. Maybe programming Mindstorms robots with RobotC?

Unity uses C# and a form of Javascript

No C/C++

Open University Australia – CPT 120 “Introduction to Programming” is using… Jython.

What the hell is that, you ask?

It’s a Python-like clone, built on Java, that has a command line and file creation rolled into one program. It hides a LOT to focus on creating if-then type programs [example: saving a .jpg offers no controls over JPEG quality], but you can quickly get into opening, editing and saving pictures, sound, etc.

Simple enough starting point. Just be ready for a shock, when you try to do the same in proper Python. Or Java, C#, etc.

You can develop mobile apps for both Android and iOS using Lua. Look up Corona SDK or Gideros Mobile: they are development platforms that use Lua. You build the app and then deploy to both types of phones.

I find Lua works best as an exposed scripting language for creating behaviour in a larger program, rather than as a full fledged language itself. For learning, a strongly typed language is I think a must.

Learning a language is one thing but learning to write good code is another matter entirely. Unfortunately most books / tutorials seem to skip this part.

The free course Introduction to Systematic Program Design on Coursera will teach you to think like a programmer. They use a learning language (a student version of Racket which is part of the Lisp/Scheme family).

It’s only 10 weeks (give or take); with some prior experience you will probably finish sooner. You will have developed some excellent habits and then you can take your pick of languages. Depending on what exactly you want to do, you will find them much easier to learn.

If you actually want to program properly, watch computing lectures on youtube

Go for the ones by Richard Buckland of UNSW

MATLAB !!!!!!!!!!

The language isn’t too important at the very beginning stages and I would personally recommend a language like Python, Ruby or Visual Basic at the start, as they are much more accessible than the likes of C or Java. What’s important at the embryonic stages is to learn the concepts of coding. When you know those, the languages are relatively easy to master. For any wannabe coders I recommend http://www.visualbasictutorial.net. It introduces the core concepts of coding in layman’s terms and is ideal for the absolute beginner. Whatever path you choose, enjoy every second of it. It’s a great move.

Taking up a degree in Physics (probably focusing on quantum), but I feel as though knowing some computer science is a useful skill. Should I just be looking at the ground level of things? Like binary and circuits?

I’m a front-end programmer, and I’d like to start dabbling in 3-d (for web). What would you recommend would be a good starting point?

Javascript and WebGL

There are countless tutorials

I’m an SEO working from home and would like to move to programming. I’ve made a few simple tools using PHP with curl, and C# with webrequests. I’m confused about how to make the move. I guess I’m wondering if it is possible to work as a programmer from home.